Answers to common contentCrawler questions | FAQs

Have you got questions about contentCrawler? Maybe you aren’t sure what makes it different from other OCR software or want to know which file types it supports? To help bring you the information you need, we asked our team of solution experts to answer the most commonly-asked questions about contentCrawler.

Frequently Asked Questions

Q1. What kinds of processing services does contentCrawler offer?

Q2. How does contentCrawler make my files text-searchable?

Q3. Why can’t I just use the in-built OCR function in my Multi-Function Device?

Q4. Will contentCrawler require our staff to do anything different with their files?

Q5. Can I OCR all legacy and newly-profiled documents together?

Q6. Should contentCrawler be run on a dedicated Server?

Q7. What graphics files can be made text-searchable?

Q8. Can I process TIFF images in one way and PDFs in another?

Q9. What does contentCrawler do with secured PDFs?

Q10. How long does it take for contentCrawler to OCR documents?

Q11. I already own an OCR product – can contentCrawler utilize this?

Q12. Is compression used for text and image content in documents?

Q13. How much compression can I expect to gain on documents?

Q1: What kinds of processing services does contentCrawler offer?

contentCrawler assesses documents for bulk Optical Character Recognition (OCR) and Compression processing.

OCR processing: contentCrawler converts image-based documents in a Windows File System, document management system, or another repository to text-searchable PDFs. It saves them back as new or replacement documents ready to be indexed and found by search technology.

Compression processing: The Compression module can apply compression and downsampling to all PDFs, reducing file size and associated storage costs.

Q2: How does contentCrawler make my files text-searchable?

contentCrawler uses OCR technology to add an invisible text layer to non-text-searchable files, which is needed for enterprise search and indexing technology. For graphic files such as JPG, PNG, BMP, and TIFF, contentCrawler will first convert them to image-based PDFs before adding the invisible text layer to the converted PDF file.

Q3: Why can’t I just use the in-built OCR function in my Multi-Function Device?

Setting your scanners or Multi-Function Devices (MFDs) to OCR scanned documents by default isn’t enough to stop non-searchable files ending up in your systems. A scanner, for example, won’t intercept documents ingested during mergers and acquisitions. Additionally, to process a backlog of files using an MFD would require someone to print and scan thousands or even hundreds of thousands of legacy files.

Q4: Will contentCrawler require our staff to do anything different with their files?

contentCrawler is an end-to-end automated solution that runs 24/7 without staff intervention. Staff do not have to worry about OCR or Compression processes or workflows. Instead, contentCrawler works in Backlog mode for legacy documents and Active Monitoring for recently-profiled documents.

Q5: Can I OCR all legacy and newly-profiled documents together?

Typically, you would create and have two processes running:

1. A Backlog process that works through your legacy documents

2. An Active Monitoring process that looks for newly-profiled documents

Both can run at the same time. The processing of documents is carried out in modified date order, with newer documents processed first. This ensures that Active Monitoring is given a higher priority over Backlog processing.

Q6: Should contentCrawler be run on a dedicated Server?

Yes – it is advisable to run the process on a dedicated computer (Windows Server 2012 R2 is recommended), to be used expressly for this purpose, particularly when processing your legacy documents. This avoids interference from other software products and provides higher throughput. This can be in a virtual machine environment as well (VMware, etc.).

Q7: What graphics files can be made text-searchable?

Image types supported are TIFF (single and multi-page), BMP, GIF, EPS, JPG, and PNG. All graphic images are converted to a PDF page size equivalent in size and proportion to the graphic image.

Q8: Can I process TIFF images in one way and PDFs in another?

Yes – you can set up two separate services: one looking specifically for PDFs with actions you want to perform, and one processing TIFF images. Multiple services can run simultaneously, so it is no problem to have this configuration.

Q9: What does contentCrawler do with secured PDFs?

contentCrawler adheres to all security settings and won’t create a copy of the PDF with added text layer unless the security settings allow for this. The newly-created version of the PDF inherits all the same PDF security settings as the original if allowed.

If the security settings on a PDF prevent it from opening, the Assess process flags it as ‘unable to assess’ or ‘unable to process’ for those that contentCrawler could not read or update with OCR text.

Q10: How long does it take for contentCrawler to OCR documents?

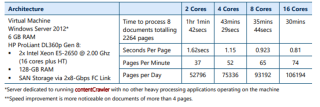

Based on processing documents in a Windows folder structure, speeds taken for contentCrawler to OCR them including Search and Assess stages, and saving them, are listed below according to the number of processing cores and the following architecture:

Q11: I already own an OCR product – can contentCrawler utilize this?

contentCrawler is a fully self-contained application so that it can optimize its speed and accuracy for the task at hand. For this reason, you are unable to use any other existing OCR technology, whether that is pdfDocs OCR Server or any other third-party OCR software product.

Q12: Is compression used for text and image content in documents?

Compression is only possible on documents containing image content – as the process resizes and/or resamples images contained in the PDF.

Q13: How much compression can I expect to gain on documents?

An image or full image-based PDF document, e.g., a scanned PDF, will gain around a 50% reduction based on default contentCrawler Compression settings. However, a number of factors can play a part in how a document compresses:

- Greater compression is gained if the original image or PDF has very high DPI (Dots Per Inch)

- Lesser compression may occur if OCR is performed where the original file size is small, as OCR adds to the overall file size due to the addition of font dictionaries

- Lesser compression occurs if the original file already has JPEG2000 or JBIG2 compression or MRC compression

- Lesser compression occurs if the original PDF contains a mixture of text and image pages